Cerebras Systems is heading to Wall Street with something no AI chip startup has brought to an IPO before: a contract with OpenAI reportedly valued at more than $10 billion. That single deal, covering inference workloads where speed matters more than raw training throughput, anchors the most credible challenge to Nvidia’s dominance that the semiconductor market has produced in a decade. The Sunnyvale-based company has filed for an initial public offering after a previous attempt was delayed amid federal scrutiny of its foreign investors — and it returns with dramatically improved financials and a second anchor customer in Amazon Web Services.

A filing submitted to the Securities and Exchange Commission sets the stage for one of the most closely watched AI hardware debuts since the generative AI boom began. No fundraising target has been disclosed.

A Second Attempt, on Steadier Ground

Cerebras’s path to Wall Street has been anything but smooth. The company filed its S-1 registration statement this month, following a first IPO attempt that was held up by a federal review of an investment from an Abu Dhabi-based investor and eventually withdrawn. The regulatory cloud has lifted. What remains is a company with stronger reported results and a set of customer contracts that would have seemed implausible when the earlier filing went cold.

According to reporting from TechCrunch, the company posted $237.8 million in GAAP net income for 2025. On a non-GAAP basis, once certain one-time items are stripped out, that figure reverses to a $75.7 million loss, a swing of $313.5 million. The accounting gap matters for investors trying to gauge whether Cerebras has reached durable profitability or whether the reported profit rests on gains that will not recur.

The OpenAI and AWS Anchors

Two customer relationships define the IPO narrative. The first is the OpenAI arrangement, reportedly valued at more than $10 billion, which covers inference workloads where speed matters more than raw training throughput. The second is an agreement with Amazon Web Services to make Cerebras chips available through Amazon’s infrastructure, a notable concession from a hyperscaler that typically prefers to design its own silicon.

CEO Andrew Feldman has been unusually blunt about what these deals represent. In a Wall Street Journal interview, Feldman described how Cerebras won the OpenAI business in direct competition with Nvidia by focusing on fast inference. He has also touted the performance advantages of Cerebras’s chips on AI workloads more broadly.

Why Inference Became the Battleground

For most of the AI boom, Nvidia’s grip on training workloads looked unassailable. The newer fight is over inference — the computationally cheaper but far more frequent task of running a trained model to answer a query, write code, or generate an image. Inference is what users actually experience. It is also where energy costs and latency compound across billions of daily interactions.

That shift has opened space for architectures built differently from general-purpose GPUs. Cerebras manufactures wafer-scale chips the size of dinner plates, an approach that trades conventional yield economics for extraordinary on-chip bandwidth. Ars Technica has documented how OpenAI has turned to Cerebras specifically for coding workloads where response speed directly affects developer productivity.

Nvidia has noticed. According to Techzine Global, Nvidia signed a $20 billion licensing deal with Groq in December and is developing inference-specific capabilities. OpenAI, for its part, is working with multiple silicon suppliers, hedging aggressively across architectures rather than committing to one.

The Capital Stack Behind the Filing

Cerebras enters public markets with a private valuation that reflects the inference race’s intensity. The company has raised substantial private funding, including a $1 billion Series H in February at a $23 billion valuation, according to the Wall Street Journal. That funding pace signals that private markets priced Cerebras as a strategic hedge against Nvidia’s dominance well before the IPO filing.

The company’s recent revenue performance points to real market traction, but investors will need to weigh that growth against profitability, where the $237.8 million GAAP profit and the $75.7 million non-GAAP loss for 2025 tell different stories.

What the Filing Reveals About the Broader Chip Market

The Cerebras IPO arrives at a moment when the economics of AI compute are being rewritten. Training a frontier model is still a capital project measured in hundreds of millions of dollars, but inference is becoming a utility — priced per token, consumed continuously, and increasingly dominated by whichever architecture delivers the lowest latency per watt.

That reframing has consequences beyond Cerebras. Google’s TPU program, AMD’s Instinct GPU line, Amazon’s Trainium and Inferentia chips, and Groq’s language-processing units are all chasing the same structural opportunity. Nvidia’s response — developing dedicated inference silicon — confirms the company itself sees the threat as real.

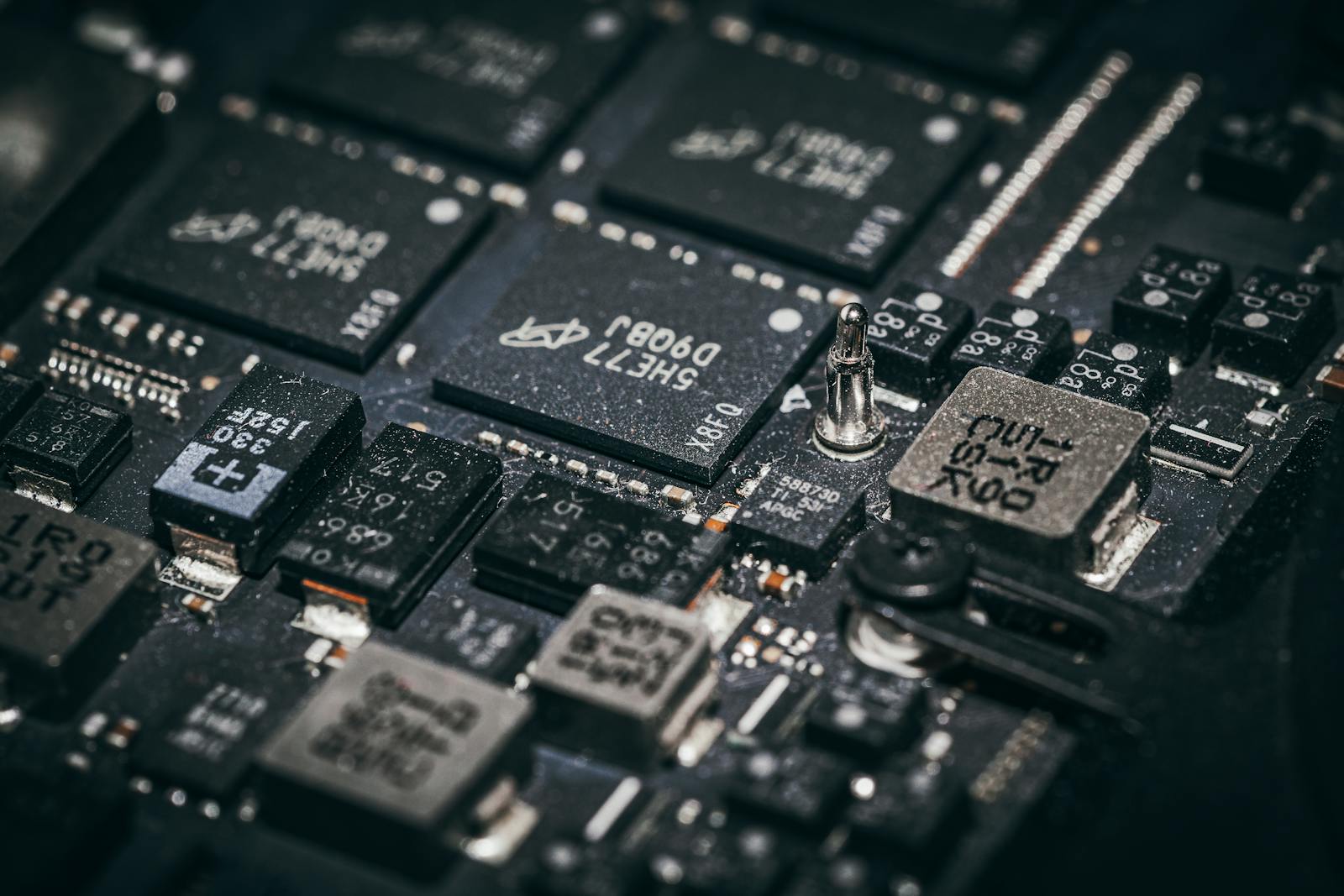

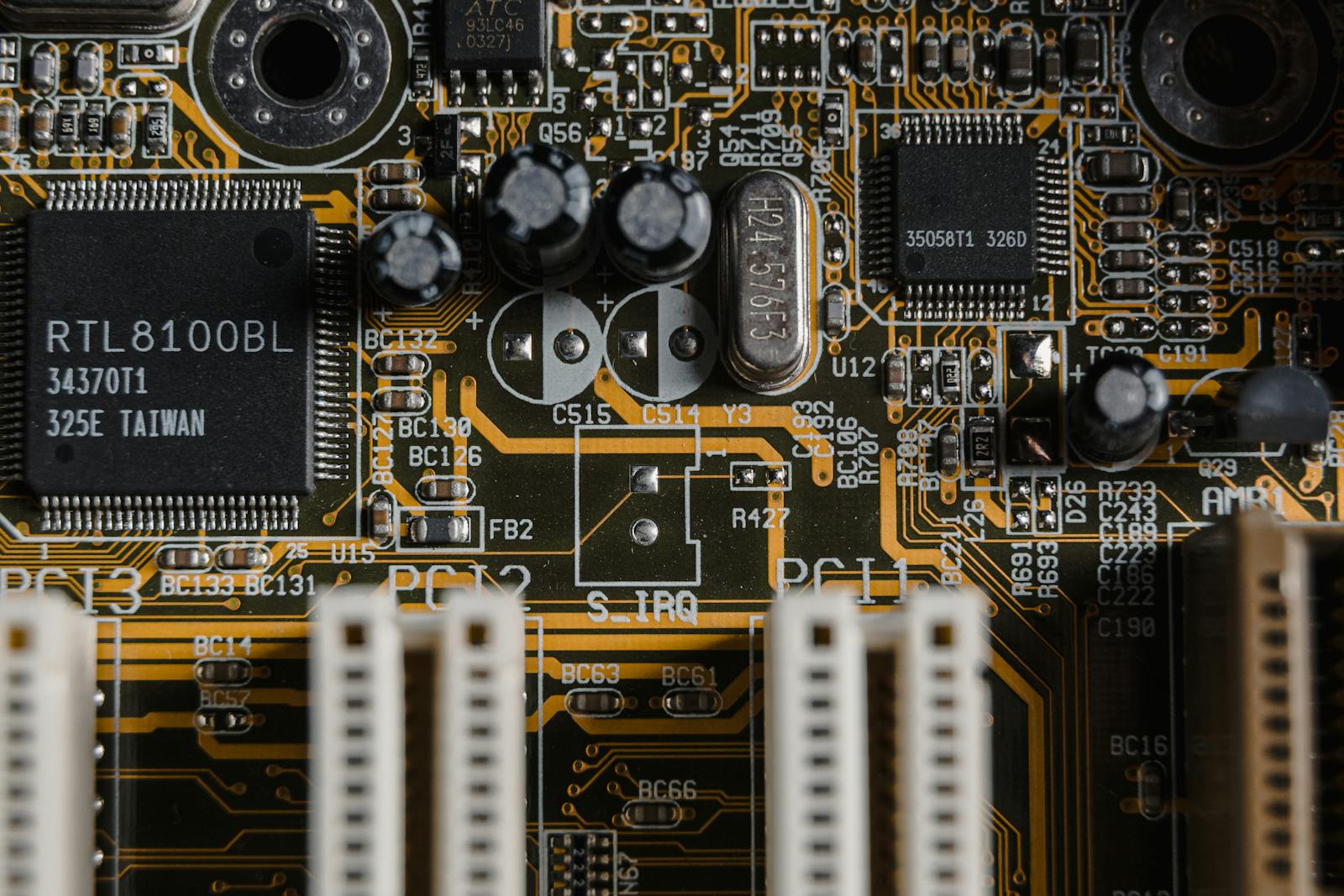

The larger question is whether specialized architectures can sustain performance leadership as model designs evolve. Inference workloads that favor Cerebras today may favor a different topology in two years. The history of semiconductors is littered with companies that won a specific benchmark and lost the next generation. For a fuller view of how the physical limits of silicon are reshaping these bets, our earlier analysis of chip density and semiconductor scaling remains relevant.

The Verdict: Can Cerebras Dethrone Nvidia?

The regulatory review that derailed the earlier filing was not an isolated event. It was part of a broader tightening of US government scrutiny over foreign capital in strategic semiconductor firms, particularly where Gulf state money intersects with AI hardware. Cerebras has structured its current cap table and customer relationships to avoid a repeat. The company’s disclosures on ownership and export compliance will be among the most carefully read sections of the S-1.

Cerebras will not dethrone Nvidia across the full AI compute stack — no single company will. But that is not what the IPO is really asking. The question is whether Cerebras can own a profitable, defensible slice of the inference market large enough to justify a $23 billion valuation and grow from there. The evidence says yes, with caveats. A $10 billion-plus OpenAI contract and an AWS distribution deal are not speculative signals — they are the strongest validation any Nvidia challenger has produced. The concentration risk is real: if OpenAI shifts architectures or Nvidia’s inference silicon closes the latency gap, Cerebras’s moat narrows fast. But right now, Cerebras has built the rarest thing in AI hardware — a company with real customers, real revenue, and a real technical thesis about what inference should look like — and it is forcing Nvidia to respond on someone else’s terms.

Three data points will determine whether the market agrees. The first is the final pricing relative to the $23 billion private mark. The second is the disclosed concentration of revenue: how much comes from OpenAI and AWS, and how long those contracts run. The third is any language about Nvidia’s evolving inference capabilities, which could compress margins across the specialized-silicon category if Nvidia delivers on its early claims. The public market will decide whether that story is worth $23 billion, more, or considerably less.

Photo by Jakub Pabis on Pexels